Introduction: From Proof-of-Concept to Enterprise Performance

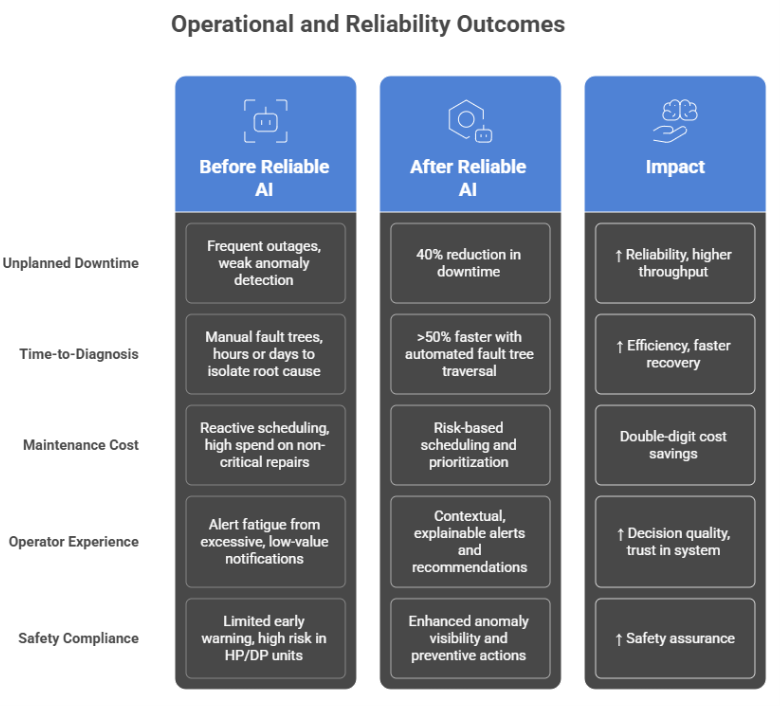

Across the Process Industries, Artificial Intelligence (AI) has evolved from an emerging idea to a proven driver of efficiency and insight. Leading organizations have demonstrated that AI can predict equipment failures, optimize energy use, and improve plant reliability. Yet for most, these achievements remain trapped within pilot programs, valuable in isolation but limited in scale.

The true opportunity lies in translating these local proofs into enterprise-wide performance. Doing so requires more than algorithms; it demands structure, governance, and a deep connection between data, operations, and financial outcomes. Only then can AI move from the lab to the control room and from experimentation to measurable enterprise value.

The Challenge: Why AI Pilots Stall Before Scale

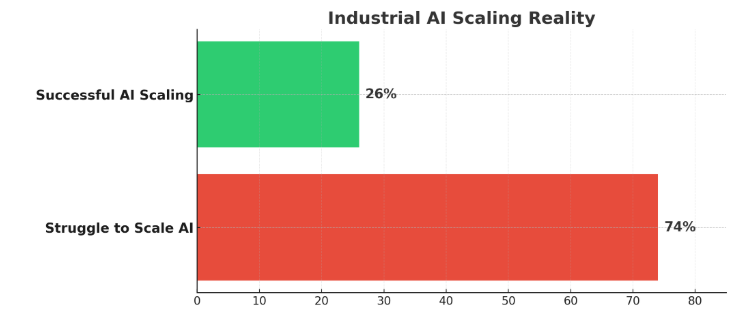

Despite significant investment, most industrial AI initiatives remain confined to the pilot stage. A recent study found that 74% of companies say they struggle to scale AI and turn pilots into full-value operations. This highlights a persistent gap between proof-of-concept success and enterprise-level impact — a challenge that continues to constrain digital transformation across the Process Industries.

Several structural challenges explain why:

Siloed data and infrastructure

Operational data (OT), maintenance systems, and enterprise IT remain fragmented, limiting visibility and preventing a unified operational view.

Limited interoperability

AI models developed in isolation often fail to connect seamlessly with plant control systems (DCS/APC) or data historians.

Undefined value metrics

Many pilots focus on model accuracy rather than business outcomes such as yield, energy efficiency, or uptime.

Lack of ownership or a practical company-wide AI implementation plan

Without clear governance or accountability, AI efforts remain academic experiments rather than becoming operational tools.

The outcome is predictable — dozens of isolated AI initiatives that look promising on paper but fail to move the EBIT needle in any meaningful way.

A Composite Scenario: From Isolated Success to Scalable Impact

These structural challenges surface in operations as fragmented intelligence, uneven performance, and a systemic inability to propagate successful pilots across the enterprise: operational, maintenance, and enterprise IT data remain trapped in isolated silos, and AI models cannot interoperate with foundational systems such as DCS, APC, and plant historians.

Given this fragmentation, the objective should be clear: build a unified AI framework that can deliver reliability and profitability at scale.

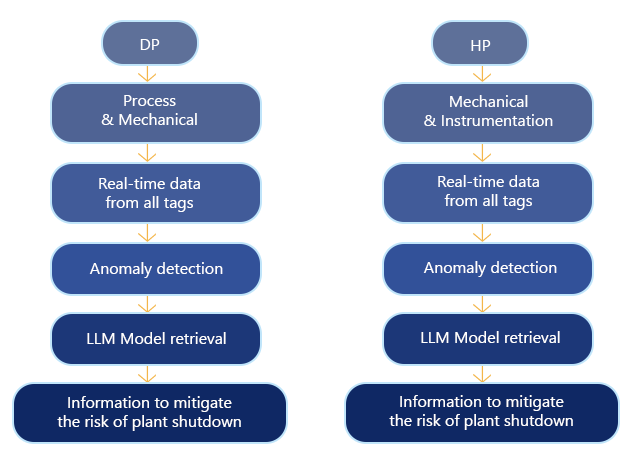

To support this shift, we use mcube™, TCG Digital’s Integrated AI Platform, which acts as a common intelligence layer across plants. At its core is an ontology-driven semantic layer that gives every data element—from sensor tags to lab results—a consistent, unambiguous meaning. By mapping all incoming data to a canonical vocabulary, mcube™ creates a unified knowledge graph that strengthens governance and ensures AI models operate on trusted, context-rich information.
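
As a rough illustration of what mapping incoming data to a canonical vocabulary can look like, the sketch below translates site-specific tag names into one shared concept. It is not mcube™ code or its API; the tag names, site names, units, and the `TAG_MAP`, `Reading`, and `to_canonical` identifiers are all hypothetical and chosen only for this example.

```python
from dataclasses import dataclass

# Hypothetical mapping: (site, local tag) -> (canonical concept, unit).
# Every name here is an illustrative assumption, not a real platform identifier.
TAG_MAP = {
    ("plant_a", "FIC-101.PV"): ("reactor_feed_flow", "m3/h"),
    ("plant_b", "R1_FEED_FLOW"): ("reactor_feed_flow", "m3/h"),
    ("plant_a", "LAB.VISC"): ("product_viscosity", "cP"),
}


@dataclass(frozen=True)
class Reading:
    """A measurement expressed in the shared vocabulary."""
    site: str
    concept: str
    unit: str
    value: float


def to_canonical(site: str, tag: str, value: float) -> Reading:
    """Translate a site-specific tag into the shared vocabulary.

    Unmapped tags raise an error instead of passing through silently,
    so gaps in the vocabulary are visible to whoever governs it.
    """
    key = (site, tag)
    if key not in TAG_MAP:
        raise KeyError(f"unmapped tag {tag!r} at site {site!r}")
    concept, unit = TAG_MAP[key]
    return Reading(site, concept, unit, value)


# Two plants name the same measurement differently; after mapping,
# both readings carry the same concept and unit.
a = to_canonical("plant_a", "FIC-101.PV", 42.0)
b = to_canonical("plant_b", "R1_FEED_FLOW", 39.5)
```

Once both readings share the concept `reactor_feed_flow`, a model or dashboard written against that concept can, in principle, be reused across sites without knowing either plant's local naming.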

Building on this semantic foundation, mcube™ serves as an autonomous AI fabric that layers intelligence over existing systems without requiring rip-and-replace modernization. It continuously integrates and contextualizes data from DCS/APC, historians, LIMS, ERP, EAM, and MIS, combining real-time and batch inputs into a single, actionable view of operations. Its data-source-agnostic design allows seamless connectivity with any IT or OT system, bridging gaps between operations, maintenance, and business functions.

mcube™ supports traditional machine learning, hybrid physics-ML models, generative AI, and agentic AI for decision support and autonomous action. Secure, standardized interfaces ensure that the platform enhances existing digital investments while progressively adding intelligence across sites. Deployable on cloud, on-premises, or hybrid environments, mcube™ provides scalable governance and democratized access to insights, enabling plants to transition from reactive operations to predictive and prescriptive performance—ultimately improving reliability, energy efficiency, and profitability.

Evolving Metrics: From OEE to Financially Linked Performance Indicators

While unified data and interoperability address the technical barriers to scaling AI, success ultimately depends on measuring what truly drives enterprise value. OEE has long been the standard for plant performance, but it reflects equipment efficiency—not margin improvement, financial risk reduction, or EBIT contribution. In today’s environment of volatile energy costs, variable feedstocks, and increasing reliability demands, OEE offers only a partial view.

To scale AI beyond isolated pilots, organizations must shift toward EBIT-linked performance metrics that capture real financial impact. Metrics such as EBIT per unit of throughput, cost-to-serve by product grade, predictive reliability value, energy margin contribution, adaptability to market conditions, and carbon intensity per EBIT dollar reveal how operational decisions influence profitability and resilience.

Just as importantly, AI pilots must be evaluated against these financially grounded KPIs. Without this alignment, pilots may show technical improvement without demonstrating business value.
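
To make the idea concrete, here is a minimal sketch of two of the metrics named above, EBIT per unit of throughput and carbon intensity per EBIT dollar, computed for a baseline period and a pilot period. The definitions are one plausible reading of those metric names rather than a standard, and every figure is invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PeriodResult:
    """Results for one reporting period (fields are illustrative assumptions)."""
    ebit_usd: float       # earnings before interest and taxes, in USD
    throughput_t: float   # tonnes of product
    co2e_t: float         # tonnes of CO2-equivalent emitted


def ebit_per_tonne(p: PeriodResult) -> float:
    """EBIT per unit of throughput, in USD per tonne."""
    return p.ebit_usd / p.throughput_t


def carbon_kg_per_ebit_usd(p: PeriodResult) -> float:
    """Carbon intensity per EBIT dollar, in kg CO2e per USD.

    Only meaningful when EBIT is positive.
    """
    if p.ebit_usd <= 0:
        raise ValueError("carbon intensity per EBIT dollar needs positive EBIT")
    return p.co2e_t * 1000.0 / p.ebit_usd


# Invented figures: same throughput, higher EBIT and lower emissions in the pilot.
baseline = PeriodResult(ebit_usd=1_200_000, throughput_t=10_000, co2e_t=6_000)
pilot = PeriodResult(ebit_usd=1_350_000, throughput_t=10_000, co2e_t=5_400)

uplift_usd_per_t = ebit_per_tonne(pilot) - ebit_per_tonne(baseline)
carbon_change = carbon_kg_per_ebit_usd(pilot) - carbon_kg_per_ebit_usd(baseline)
```

With these invented numbers, the pilot would be reported as a gain in USD of EBIT per tonne and a drop in kg CO2e per EBIT dollar, rather than as an improvement in model accuracy.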

When plants measure outcomes through an EBIT-focused lens, AI moves from experimentation to a scalable driver of margin growth and operational excellence.

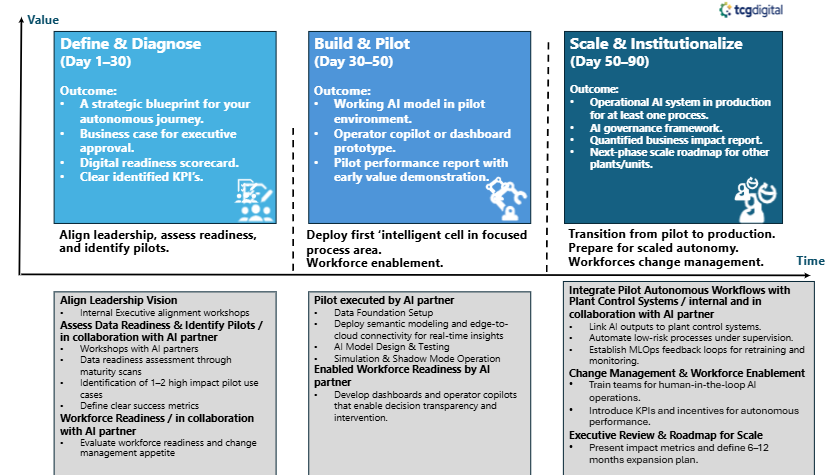

A Structured Path to Scalable AI: From Pilot to Autonomy in 90 Days

TCG Digital works alongside clients and their AI partners to connect strategy with execution:

- Leadership Alignment: Executive workshops to unite business vision with operational priorities.
- Data & Pilot Readiness: Joint maturity scans, pilot selection, and success metric definition.
- Workforce Enablement: Training programs and copilots that empower operators with AI-assisted decision-making.
- Integration & Governance: Linking pilot workflows with plant control systems under supervised automation, supported by MLOps frameworks for model monitoring and retraining.
- Change Management: Preparing teams for human-in-the-loop autonomy through continuous coaching and KPI-linked incentives.
- Executive Review: Consolidating results, measuring impact, and setting up a 6–12-month roadmap for scaled deployment.
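
The model monitoring and retraining mentioned under Integration & Governance can take many forms. One simple possibility, sketched below, is to compare a model's recent prediction error with the error recorded at deployment and flag the model when the gap grows too large. The function name, the 1.5x tolerance, and the sample errors are assumptions made for this example, not part of any specific MLOps framework.

```python
from statistics import mean


def needs_retraining(baseline_errors, recent_errors, tolerance=1.5):
    """Return True when recent mean absolute error exceeds
    `tolerance` times the mean absolute error seen at deployment.

    Both arguments are sequences of (prediction - actual) values.
    The default tolerance is an arbitrary illustrative choice.
    """
    baseline_mae = mean(abs(e) for e in baseline_errors)
    recent_mae = mean(abs(e) for e in recent_errors)
    return recent_mae > tolerance * baseline_mae


# Invented error samples for illustration only.
at_deployment = [0.5, -0.4, 0.6, -0.5]
drifted = [1.0, -0.9, 1.1, -1.0]
stable = [0.5, -0.6, 0.4, -0.5]

flag_drifted = needs_retraining(at_deployment, drifted)
flag_stable = needs_retraining(at_deployment, stable)
```

Under supervised automation, a flag like this would typically prompt a human review before any retraining or redeployment, rather than triggering it automatically.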

Conclusion: The Path Forward

Industrial AI has reached an inflection point. The real differentiator is the capability to scale with intent — bringing data, intelligence, and people together under a unified operational vision. Success comes from structured execution that connects technology with measurable business impact.

We help enterprises make this transformation real — embedding AI into the fabric of plant operations, control systems, and decision-making. The outcome is a smarter, more resilient operation that continuously learns, adapts, and optimizes performance.

The path forward is clear: move beyond pilots, scale with purpose, and let AI drive sustainable, enterprise-wide value.